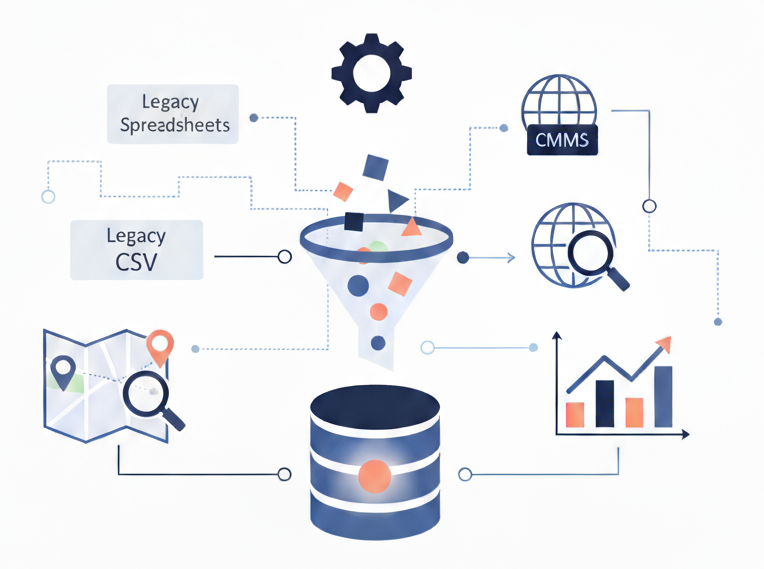

Clean & Digitize Historical Data

Uses imputation, outlier detection, categorical encoding and normalization to produce model-ready, spatialized datasets.
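As a rough illustration of the four techniques named above, here is a minimal Python sketch using only the standard library. The record fields (`pipe_id`, `material`, `diameter_in`), the sample values, and the specific choices of median imputation, the 1.5 × IQR outlier rule and min-max scaling are assumptions made for the example; they are not a description of the actual pipeline.

```python
from statistics import median, quantiles

# Hypothetical break records; IDs, field names and values are illustrative only.
RECORDS = [
    {"pipe_id": "P-1", "material": "cast iron", "diameter_in": 6.0},
    {"pipe_id": "P-2", "material": "PVC", "diameter_in": None},   # missing value
    {"pipe_id": "P-3", "material": "cast iron", "diameter_in": 8.0},
    {"pipe_id": "P-4", "material": "ductile iron", "diameter_in": 6.0},
    {"pipe_id": "P-5", "material": "PVC", "diameter_in": 60.0},   # suspicious entry
    {"pipe_id": "P-6", "material": "PVC", "diameter_in": 8.0},
    {"pipe_id": "P-7", "material": "cast iron", "diameter_in": 12.0},
    {"pipe_id": "P-8", "material": "ductile iron", "diameter_in": 8.0},
    {"pipe_id": "P-9", "material": "cast iron", "diameter_in": 6.0},
]


def clean(rows, num_field="diameter_in", cat_field="material"):
    """Impute, flag outliers, one-hot encode and normalize a list of records."""
    known = [r[num_field] for r in rows if r[num_field] is not None]
    fill = median(known)                   # value used for imputation
    q1, _, q3 = quantiles(known, n=4)      # quartiles for the IQR rule
    low, high = q1 - 1.5 * (q3 - q1), q3 + 1.5 * (q3 - q1)
    categories = sorted({r[cat_field] for r in rows})

    cleaned = []
    for r in rows:
        value = fill if r[num_field] is None else r[num_field]
        cleaned.append({
            "pipe_id": r["pipe_id"],
            num_field: value,
            "imputed": r[num_field] is None,
            "outlier": not (low <= value <= high),
            # One-hot encode the categorical field.
            **{f"{cat_field}={c}": int(r[cat_field] == c) for c in categories},
        })

    # Min-max normalize, using only values that were not flagged as outliers.
    inliers = [c[num_field] for c in cleaned if not c["outlier"]]
    vmin, vmax = min(inliers), max(inliers)
    span = (vmax - vmin) or 1.0            # avoid dividing by zero
    for c in cleaned:
        c["normalized"] = None if c["outlier"] else (c[num_field] - vmin) / span
    return cleaned
```

In this sketch, flagged outliers are kept in the output rather than dropped, so a reviewer can decide whether a value such as the 60-inch entry is a typo or a real asset.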

Outcomes & ROI

Connect Teams

Bridge field and office with a shared visual view of the system’s historical performance

Improve Accuracy

Strengthen modeling accuracy through clean, validated historical data

See Clearly

Gain clear insight into system performance before modeling even begins

At a Glance

We turn scattered records into analysis-ready datasets. That includes schema mapping, geocoding historical breaks, reconciling IDs and documenting the assumptions so your teams can trust the results.

- Standardize formats and schemas across legacy spreadsheets, CMMS and GIS.

- Geocode historical failure records into GIS-compatible coordinates.

- De-duplicate, normalize and document data lineage.

- Produce a “single source of truth” ready for analytics and reporting.

- Validate data against asset maps and correct inconsistencies.
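To make the standardize, de-duplicate and lineage steps above more concrete, the following Python sketch merges break records from two hypothetical sources. The column aliases, date formats, source names and sample rows are all assumptions for illustration; real legacy exports will need their own mappings, and geocoding and map validation are left out.

```python
from datetime import datetime

# Hypothetical column aliases mapping legacy headers to one canonical schema.
COLUMN_ALIASES = {
    "asset id": "asset_id", "pipeid": "asset_id",
    "break date": "break_date", "date_of_failure": "break_date",
}
# Date formats assumed to appear in the legacy files.
DATE_FORMATS = ("%Y-%m-%d", "%m/%d/%Y")


def parse_date(text):
    """Return an ISO date string, trying each assumed format in turn."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(text.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    raise ValueError(f"unrecognized date: {text!r}")


def standardize(row, source):
    """Map one raw row onto the canonical schema and record where it came from."""
    out = {}
    for key, value in row.items():
        canonical = COLUMN_ALIASES.get(key.strip().lower())
        if canonical:
            out[canonical] = value
    out["asset_id"] = out["asset_id"].strip().upper()   # reconcile ID variants
    out["break_date"] = parse_date(out["break_date"])
    out["lineage"] = [source]
    return out


def merge_sources(sources):
    """De-duplicate on (asset_id, break_date), keeping every contributing source."""
    merged = {}
    for source, rows in sources.items():
        for row in rows:
            rec = standardize(row, source)
            key = (rec["asset_id"], rec["break_date"])
            if key in merged:
                merged[key]["lineage"].append(source)
            else:
                merged[key] = rec
    return list(merged.values())


# Illustrative input: the same break appears in a spreadsheet and a CMMS export.
SOURCES = {
    "spreadsheet": [{"Asset ID": "p-101", "Break Date": "03/14/2009"}],
    "cmms": [
        {"PipeID": "P-101 ", "date_of_failure": "2009-03-14"},
        {"PipeID": "P-202", "date_of_failure": "2015-07-01"},
    ],
}
```

Calling `merge_sources(SOURCES)` on this sample yields two records, with the duplicated break carrying both source names in its `lineage` list so the merge stays auditable.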

Designed For

Systems with years of historical records spread across spreadsheets, PDFs, paper files or disconnected systems, where it is hard to visualize failure clusters and analyze patterns.

Implementation

Rapid data audit followed by an agreed cleanup/geo-enrichment sprint.

Data Cleaning & Digitization

4–6 weeks